Google Just Published the Multi-Agent System I Built a Month Ago


Google dropped a tutorial yesterday showing how to build a multi-agent blogging system using their Agent Development Kit. Three agents working together: Reddit Scanner finds trending topics, GCP Expert provides technical depth, Blog Drafter writes the final article.

I laughed when I read it. That’s BlogClaw. The same architecture I shipped in February.

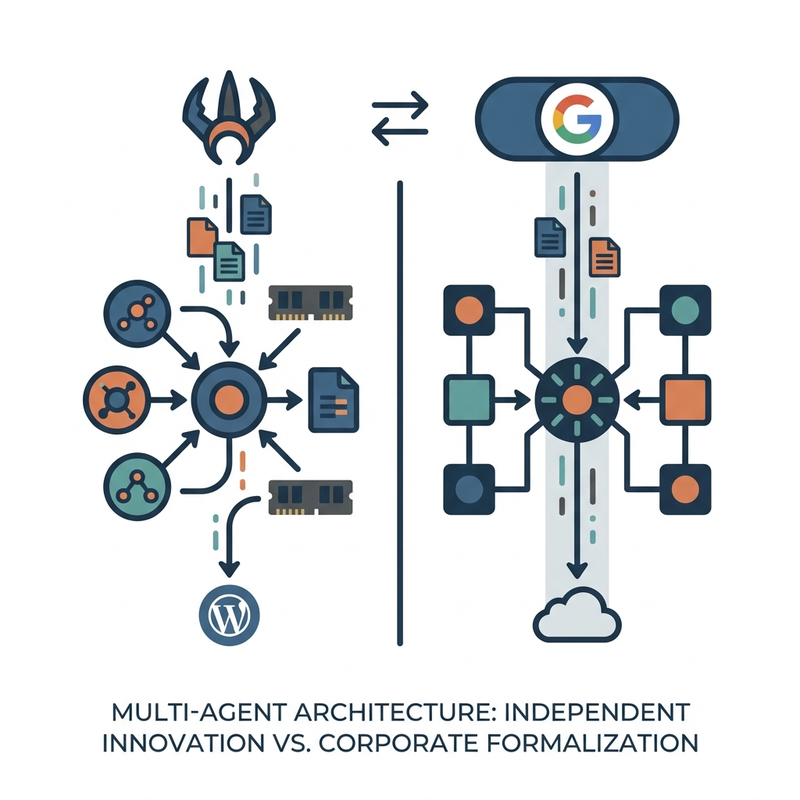

The Architecture Match

Google’s Dev Signal system has three specialized agents. Reddit Scanner monitors subreddits for trending discussions. GCP Expert pulls from documentation to add technical detail. Blog Drafter synthesizes everything into publishable content.

I built the same thing with different sources. One agent monitors my WordPress revisions to learn my editing patterns. Another analyzes what I publish versus what stays in drafts. A third generates content following the learned style rules.

Both systems use a root orchestrator. Google calls theirs the “Dev Signal Orchestrator.” I use scheduled task coordination. Same pattern, different implementations.
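The shared pattern can be sketched in a few lines of Python. This is an illustration only, not BlogClaw's or Google's actual code: the agent functions, their return values, and `run_pipeline` are hypothetical stand-ins, and a scheduler such as cron is assumed to trigger the run.

```python
from typing import Callable

# Hypothetical agent signature: each agent reads the shared context
# and returns the keys it wants to add to it.
Agent = Callable[[dict], dict]

def revision_monitor(ctx: dict) -> dict:
    # Stand-in for reading WordPress revisions and extracting edit patterns.
    return {"edit_patterns": ["shorten intros"]}

def publish_analyzer(ctx: dict) -> dict:
    # Stand-in for comparing published posts with abandoned drafts.
    return {"style_rules": [f"rule: {p}" for p in ctx["edit_patterns"]]}

def drafter(ctx: dict) -> dict:
    # Stand-in for the LLM call that writes a draft from the learned rules.
    return {"draft": f"Draft following {len(ctx['style_rules'])} style rule(s)"}

def run_pipeline(agents: list[Agent]) -> dict:
    """Root orchestrator: run each agent in order over a shared context."""
    ctx: dict = {}
    for agent in agents:
        ctx.update(agent(ctx))
    return ctx

result = run_pipeline([revision_monitor, publish_analyzer, drafter])
print(result["draft"])  # Draft following 1 style rule(s)
```

The orchestrator knows nothing about what each agent does. It only fixes the order and passes the context along, which is why the same shape works for Reddit threads or WordPress revisions.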

Both rely on memory systems. Google uses Vertex AI memory banks to persist context between runs. I use markdown files with YAML frontmatter tracking confidence scores and staleness metrics.
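A file-based memory entry of this kind might look like the sample below. The field names (`rule`, `confidence`, `last_verified`), the sample values, and the 30-day threshold are assumptions made for illustration, and the parser only handles flat `key: value` frontmatter. A real YAML library would be needed for anything nested.

```python
from datetime import date

# Invented sample entry; the fields and values are illustrative only.
SAMPLE = """---
rule: prefer short intros
confidence: 0.82
last_verified: 2024-01-15
---
Intros over three sentences usually get cut during editing.
"""

def parse_memory(text: str) -> tuple[dict, str]:
    """Split a memory file into flat key/value frontmatter and a body."""
    _, header, body = text.split("---\n", 2)
    meta = {}
    for line in header.splitlines():
        key, _, value = line.partition(":")
        meta[key.strip()] = value.strip()
    return meta, body

def is_stale(meta: dict, today: date, max_age_days: int = 30) -> bool:
    """Treat an entry as stale once it has gone unverified for too long."""
    verified = date.fromisoformat(meta["last_verified"])
    return (today - verified).days > max_age_days

meta, body = parse_memory(SAMPLE)
```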

Why This Keeps Happening

This isn’t the first time a solo developer has shipped something before a big company formalized it, and it won’t be the last. I expect to see much more of it. Claude, for one, is still copying OpenClaw and pulling its features into its own product.

I move faster. No committee approvals. No compliance review. No quarterly planning cycles. I see a problem, I build a solution, I ship it.

Google’s tutorial is great. It’s well documented, it shows best practices, it’ll help a lot of developers. But the architecture isn’t novel. People have been building multi-agent systems like this since Model Context Protocol standardized tool access.

The convergence is interesting though. When I independently arrive at the same architectural patterns as Google engineers, it suggests we’ve found something that actually works. Three specialized agents plus orchestration isn’t arbitrary. It’s the right abstraction for this problem.

What Google Added That I Skipped

Google’s version includes production-grade error handling I didn’t bother with. Retry logic, graceful degradation, proper logging. The stuff you need when you’re running at scale.
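For a sense of what that involves, here is a minimal sketch of retry with exponential backoff, logging, and a fallback value for graceful degradation. It is not taken from Google's tutorial. The `call_with_retry` helper and its parameters are hypothetical.

```python
import logging
import time

logger = logging.getLogger("agents")

def call_with_retry(fn, *, attempts=3, base_delay=0.5, fallback=None, sleep=time.sleep):
    """Call fn(); on failure, log, back off exponentially, and retry.

    If every attempt fails, return `fallback` instead of raising, so the
    rest of the pipeline can continue with degraded input.
    """
    for attempt in range(1, attempts + 1):
        try:
            return fn()
        except Exception as exc:
            logger.warning("attempt %d/%d failed: %s", attempt, attempts, exc)
            if attempt < attempts:
                # Delays double each time: base_delay, 2 * base_delay, ...
                sleep(base_delay * 2 ** (attempt - 1))
    return fallback
```

Wrapping an agent call as `call_with_retry(fetch_topics, fallback=[])` (with `fetch_topics` being whatever function hits the network) lets a drafting step run on an empty topic list rather than crash the whole job.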

They also show how to deploy everything on Cloud Run with proper secrets management. BlogClaw runs on my personal VPS with environment variables in a .env file. Works fine for a few blogs. Wouldn’t scale to thousands.

The Vertex AI memory banks are more sophisticated than my file-based storage. Semantic search across conversation history, automatic context windowing, built-in staleness detection. I do the same things with grep and file timestamps. Functional but primitive.
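The grep-and-timestamps approach translates to Python roughly as follows. This is a sketch under assumptions: memory files are taken to be `*.md` files in a single directory, and the function names are made up for the example.

```python
import time
from pathlib import Path
from typing import Optional

def search_memory(root: Path, term: str) -> list[Path]:
    """grep-style lookup: memory files whose text contains the term."""
    term = term.lower()
    return sorted(
        p for p in root.glob("*.md")
        if term in p.read_text(encoding="utf-8").lower()
    )

def stale_files(root: Path, max_age_days: float, now: Optional[float] = None) -> list[Path]:
    """Staleness by timestamp: files not modified within max_age_days."""
    now = time.time() if now is None else now
    cutoff = now - max_age_days * 86400  # seconds per day
    return sorted(p for p in root.glob("*.md") if p.stat().st_mtime < cutoff)
```

Substring matching finds only literal words, which is the gap semantic search closes: a note about "opening paragraphs" will never match a query for "intros".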

The Real Lesson Here

You don’t need Google’s infrastructure to build autonomous systems. Model Context Protocol standardized the hard parts. Any LLM that supports tool use can coordinate multiple agents. The patterns are accessible to anyone.

What separates hobby projects from production systems isn’t the architecture. It’s error handling, monitoring, deployment automation, and scale considerations. Google’s tutorial excels at showing that part.

But the core insight remains: one person with clear requirements and no bureaucracy can ship working AI systems before large organizations finish their planning docs. BlogClaw’s implementation is straightforward: Python scripts and REST APIs, with no MCP wrappers. The architecture is what matters.

I built BlogClaw because I needed it. It analyzes how I edit blog posts, learns patterns, and improves draft quality over time. The fact that Google published essentially the same architecture a month later just confirms the approach works.

Where This Goes Next

Google’s formalizing multi-agent patterns makes the whole space more legitimate. More developers will build these systems. Better tooling will emerge. The MCP ecosystem will expand.

I’ll keep improving BlogClaw. I’m adding lexical analysis (comparing word choice in published versus rejected drafts) and structural pattern detection (which article formats I actually publish). It’s the same multi-agent coordination, just getting smarter about what it learns.
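One simple way to compare word choice is to rank words by how much more often they appear in published posts than in rejected drafts. The sketch below assumes that approach. It is not BlogClaw's actual method, and the function names and scoring are hypothetical.

```python
import re
from collections import Counter

def word_counts(texts):
    """Count lowercase word occurrences across a list of texts."""
    counts = Counter()
    for text in texts:
        counts.update(re.findall(r"[a-z']+", text.lower()))
    return counts

def distinctive_words(published, rejected, top_n=5):
    """Rank words by relative frequency in published minus rejected text."""
    pub, rej = word_counts(published), word_counts(rejected)
    pub_total = sum(pub.values()) or 1  # avoid dividing by zero on empty input
    rej_total = sum(rej.values()) or 1
    scores = {
        w: pub[w] / pub_total - rej[w] / rej_total
        for w in set(pub) | set(rej)
    }
    # Highest score first; ties broken alphabetically for stable output.
    ranked = sorted(scores, key=lambda w: (-scores[w], w))
    return ranked[:top_n]
```

Words at the top of the ranking are ones to keep using. Reversing the sort surfaces the words that tend to show up in drafts that never ship.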

The convergence between indie projects and big company tutorials isn’t competition. It’s validation that we’re solving real problems with architectures that make sense.

Build what you need. Ship it. If Google publishes the same thing later, you were just early.

—

Source: Google Cloud Blog – Build a Multi-Agent System for Expert Content

Related: Self-Improving Blog System: How BlogClaw Learns From WordPress Edits