Nanoclaw Ranking Paradox

When the Creator Can’t Rank: The Nanoclaw Paradox and Google’s Broken Link Graph

Fast forward to 2026, and we’re witnessing something I’ve been warning about since the 2018 Medic update: the link graph doesn’t work the way it used to. Maybe it doesn’t work at all. Google has been incredibly coy about how it has rolled out these changes since then. Heard of EAT? Heard of brand bias? Heard of links being watered down? Yeah, probably not.

Enter: The Nanoclaw Paradox.

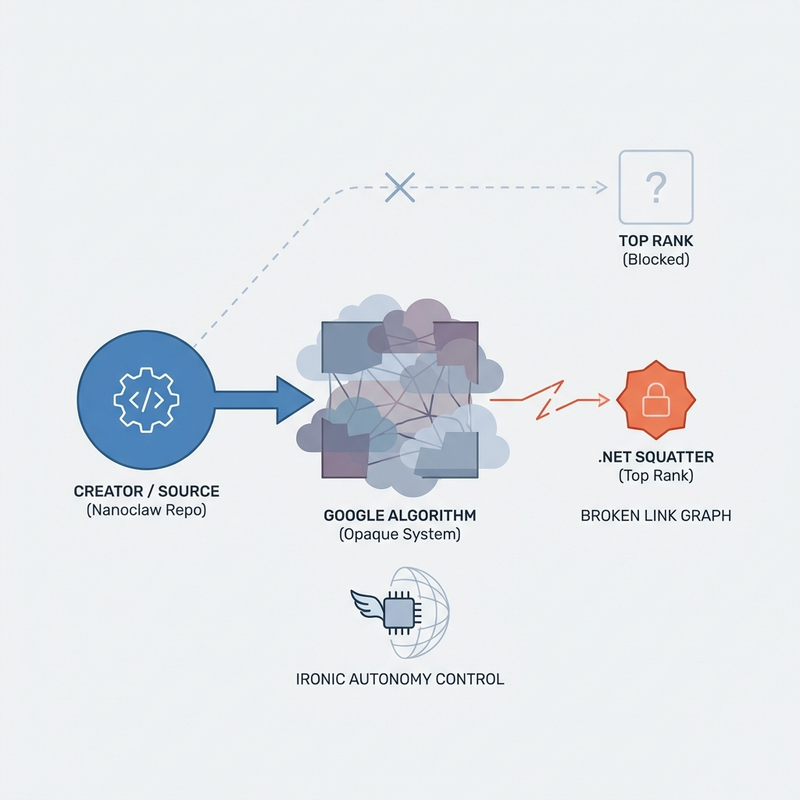

@Gavriel_Cohen, the creator of Nanoclaw – an AI agent framework getting serious traction on GitHub – can’t rank for his own product name. A random .net domain squats on the top spot instead. Despite having the authoritative GitHub repository, the actual documentation, the real project… Google says “nah, we’re going with the domain that has ‘nanoclaw’ in the URL.”

This isn’t a bug. This is the system working exactly as designed – which is to say, it’s fundamentally unpredictable.

The Old Math vs. The New Math

Pre-2018, this scenario would have been impossible. The link graph had clear signals:

- GitHub repo with active commits? Authority signal.

- Documentation linked from the official repo? Stronger authority.

- Community discussions pointing to the real project? Social proof.

- The actual creator actively maintaining the project? Human verification.

The math was calculable. You could predict outcomes.
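To make "calculable" concrete, here is a minimal sketch of the textbook PageRank calculation over a tiny link graph. It is a simplification for illustration, not Google's production system, and every page name in it is a hypothetical placeholder rather than a real URL. The damping factor of 0.85 is the commonly cited textbook value, assumed here.

```python
# Simplified textbook PageRank via power iteration.
# Assumption: this is an illustrative model, not how Google ranks pages today.

def pagerank(links, damping=0.85, iterations=50):
    """Return a rank per page. `links` maps each page to the pages it links to."""
    pages = set(links)
    for targets in links.values():
        pages.update(targets)
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}

    for _ in range(iterations):
        # Every page starts each round with the "random jump" share.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # Split this page's rank evenly across its outbound links.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # Dangling page (no outbound links): spread its rank over all pages.
                share = damping * rank[page] / n
                for other in pages:
                    new_rank[other] += share
        rank = new_rank
    return rank


# Hypothetical graph: the repo and docs link to each other, a community
# thread links to both, and nothing links to the exact-match domain.
links = {
    "github_repo": ["official_docs"],
    "official_docs": ["github_repo"],
    "community_thread": ["github_repo", "official_docs"],
    "exact_match_domain": [],
}

ranks = pagerank(links)
for page, score in sorted(ranks.items(), key=lambda item: item[1], reverse=True):
    print(f"{page}: {score:.3f}")
```

In this toy model the repo and docs end up far ahead of the domain with no inbound links, which is the outcome the old math would have predicted.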

Post-Medic update, Google washes hundreds of datapoints through AI-powered ranking systems. The link graph became one input among many – and apparently not a heavily weighted one. Now we get outcomes like this: the person who built the thing can’t rank for the thing they built.

I look at everything as math, even if I don’t understand it. But this math? It’s not just opaque – it’s apparently random.

The Irony Runs Deep

What makes this particularly poignant is what Nanoclaw actually does: it’s an AI agent framework designed to give you more control and autonomy in how you use AI systems.

The creator built a tool to escape algorithmic control… and can’t escape Google’s algorithm controlling his own project’s visibility.

You can’t make this up.

This Is Why I Stopped Promising Ranking Results

Several trends emerge when you’ve been in SEO for 20+ years:

First, the people parroting Google’s messaging about “quality content” and “helpful, people-first content” are missing the point. Gavriel’s content IS helpful. It IS authoritative. It IS exactly what searchers need. And it doesn’t rank.

Second, the advice to “just build great content and links will follow” ignores the reality that the link graph itself is broken. GitHub links SHOULD be authoritative. They’re not being treated that way.

Third, the industry keeps selling SEO as if it’s calculable – like media spend where you can predict ROI. It’s not. It’s a black box with a fair bit of roulette thrown in.

Google’s own lawyers have framed modern search ranking as inherently unpredictable. Not because they’re being coy – because that’s actually how the system works now. There’s no single optimization point you can target. It’s like going to a movie theater where the same film shows differently every time you watch it.

Where Do We Go From Here?

If you’re building something real – a product, a service, a community – here’s my honest advice:

Don’t invest in SEO the same way you’d invest in calculable channels. The math doesn’t work that way anymore. It’s not like media spend across networks where you can measure inputs and predict outputs.

Do test and experiment independently. Don’t just follow what other businesses say works. Your mileage will vary wildly. What ranks today might not rank tomorrow. What worked for them might not work for you.

Do diversify your audience ownership. Email lists. Direct relationships. Community platforms. Social media presence. Discord. RSS feeds if you’re feeling retro. Anywhere you can reach your audience without Google as the middleman.

Do maintain realistic expectations. SEO is an inexact science. A bit of a clown show, honestly. Google’s legal team has said as much. Plan accordingly.

The Nanoclaw Paradox isn’t an edge case. It’s a warning sign.

Build great things. Document them well and consider order in your planning. But don’t expect the search engines to care who actually created what. That’s not how the math works anymore – if it ever really did.

—

Want updates on search evolution and off-network audience building? I write about the intersection of SEO realism, AI agents, and audience ownership at brianchappell.com.